"It is not the strongest of the species that survives, nor the most intelligent, but the one most responsive to change." - Leon C. Megginson

Have you ever thought about how your body regulates its temperature? You do not consciously tell your sweat glands to activate when it gets warm. Your autonomic nervous system handles the process for you.

Back in 2003, Jeffrey Kephart and David Chess from IBM published a landmark paper called "The Vision of Autonomic Computing." They observed that computing systems were growing so complex that installing, configuring, tuning, and maintaining them would soon exceed what human administrators could manage. Their proposed solution was autonomic computing: software systems that manage themselves according to high-level goals, much like the human body regulates itself.

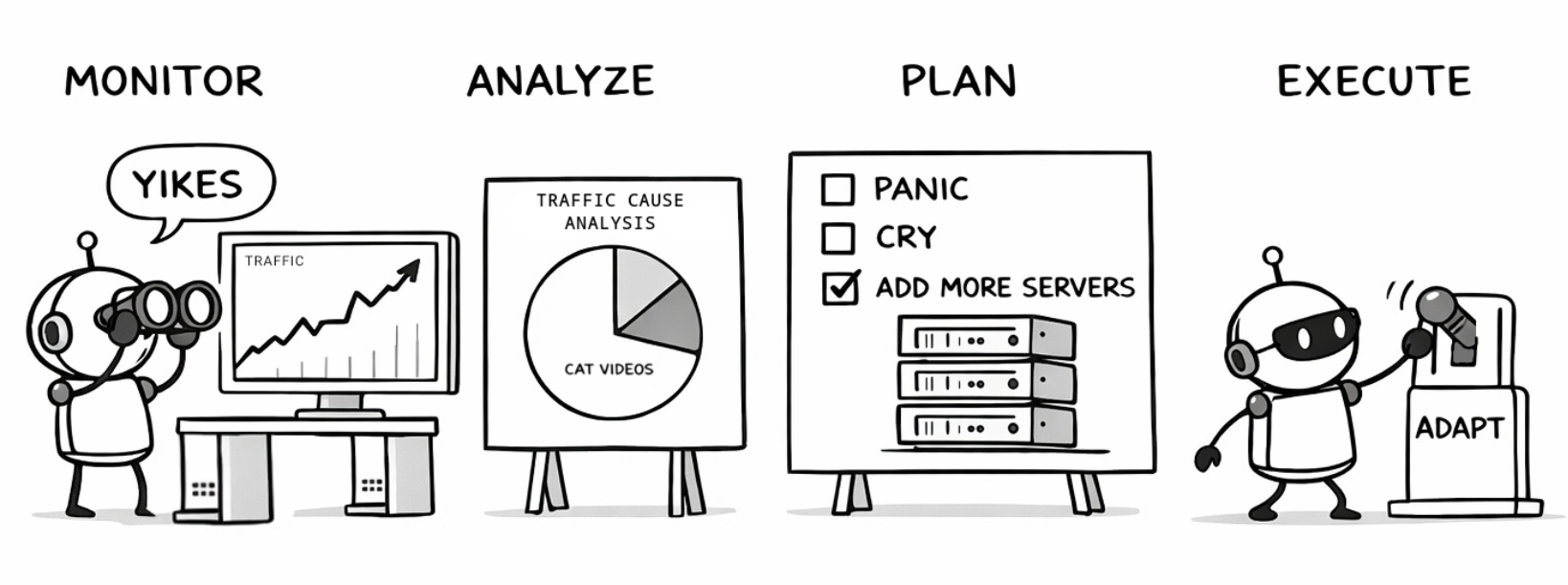

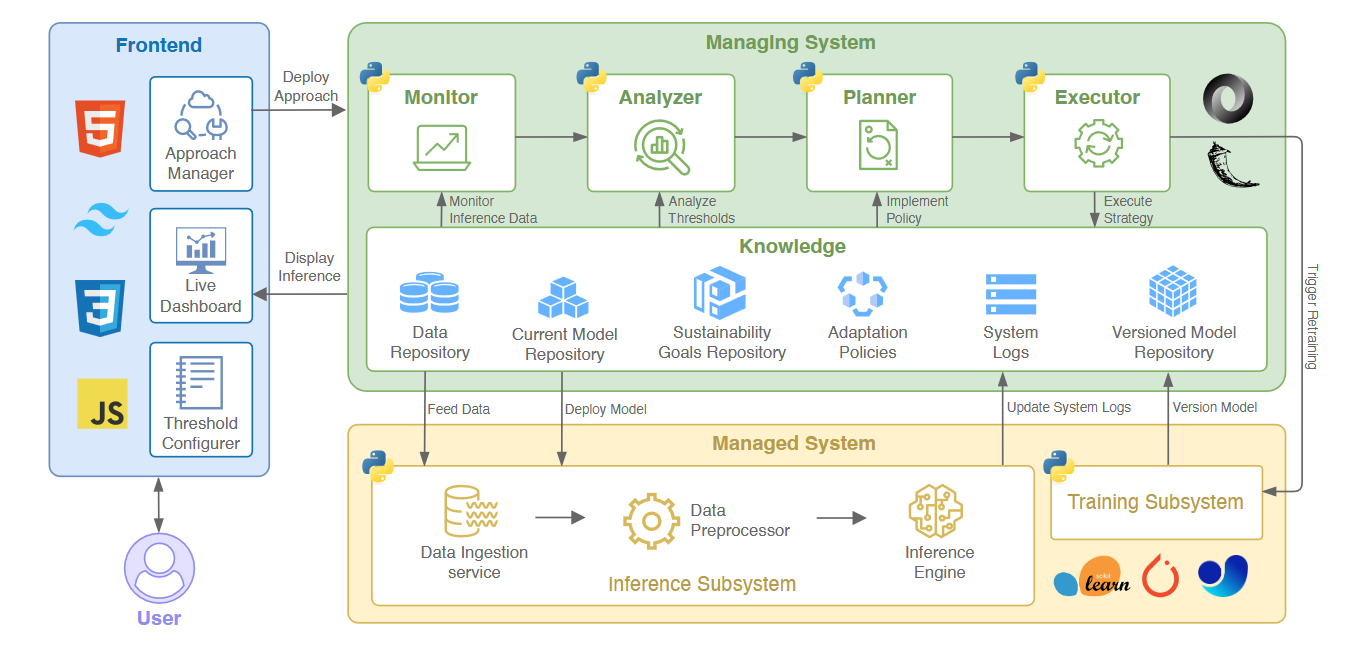

This vision popularized the paradigm of self-adaptation. In a self-adaptive system, software monitors its own behavior and its surrounding environment. When it detects a problem, it analyzes the situation, plans a response, and executes a fix. We call this the MAPE-K loop (Monitor, Analyze, Plan, Execute, over a shared Knowledge base). IBM outlined four key properties for these systems: self-configuration, self-optimization, self-healing, and self-protection. This practical concept changes how we build software. Instead of writing static code that fails when the world changes, we write systems that respond to change.
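To make the loop concrete, here is a minimal Python sketch of one generic MAPE-K cycle, built on the body-temperature analogy from the start of this post. The class name `MapeKLoop`, the `goal_range` knowledge entry, and the "increase"/"decrease" actions are hypothetical illustrations, not part of IBM's reference architecture or of any specific framework.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, Optional


@dataclass
class MapeKLoop:
    """Toy MAPE-K loop; a hypothetical sketch, not IBM's reference design."""
    read_sensor: Callable[[], float]   # probe into the managed system
    actuate: Callable[[str], None]     # effector on the managed system
    knowledge: Dict[str, Any] = field(default_factory=dict)  # shared goals and history

    def monitor(self) -> float:
        # Observe the managed system and record the reading in the knowledge base.
        value = self.read_sensor()
        self.knowledge.setdefault("history", []).append(value)
        return value

    def analyze(self, value: float) -> bool:
        # A symptom exists when the reading leaves the goal range.
        low, high = self.knowledge["goal_range"]
        return not (low <= value <= high)

    def plan(self, value: float) -> str:
        # Choose a corrective action that pushes the reading back toward the goal.
        low, _high = self.knowledge["goal_range"]
        return "increase" if value < low else "decrease"

    def execute(self, action: str) -> None:
        self.actuate(action)

    def step(self) -> Optional[str]:
        # Run one full Monitor-Analyze-Plan-Execute cycle.
        value = self.monitor()
        if not self.analyze(value):
            return None  # within goals: leave the system alone
        action = self.plan(value)
        self.execute(action)
        return action


# Usage: a "body temperature" that should stay between 36.5 and 37.5 degrees.
readings = iter([36.8, 38.1, 36.1])
applied = []
loop = MapeKLoop(lambda: next(readings), applied.append, {"goal_range": (36.5, 37.5)})
results = [loop.step() for _ in range(3)]
# results == [None, "decrease", "increase"]; applied == ["decrease", "increase"]
```

The loop stays idle while readings are within the goal range and acts only when the Analyze step reports a symptom, which is the same "leave it alone unless needed" behavior described throughout this post.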

Today, we face a new crisis. Machine learning models are everywhere. They consume large amounts of energy and computational resources. Training and running these models costs money, harms the environment, and creates maintenance challenges. We need our systems to be sustainable.

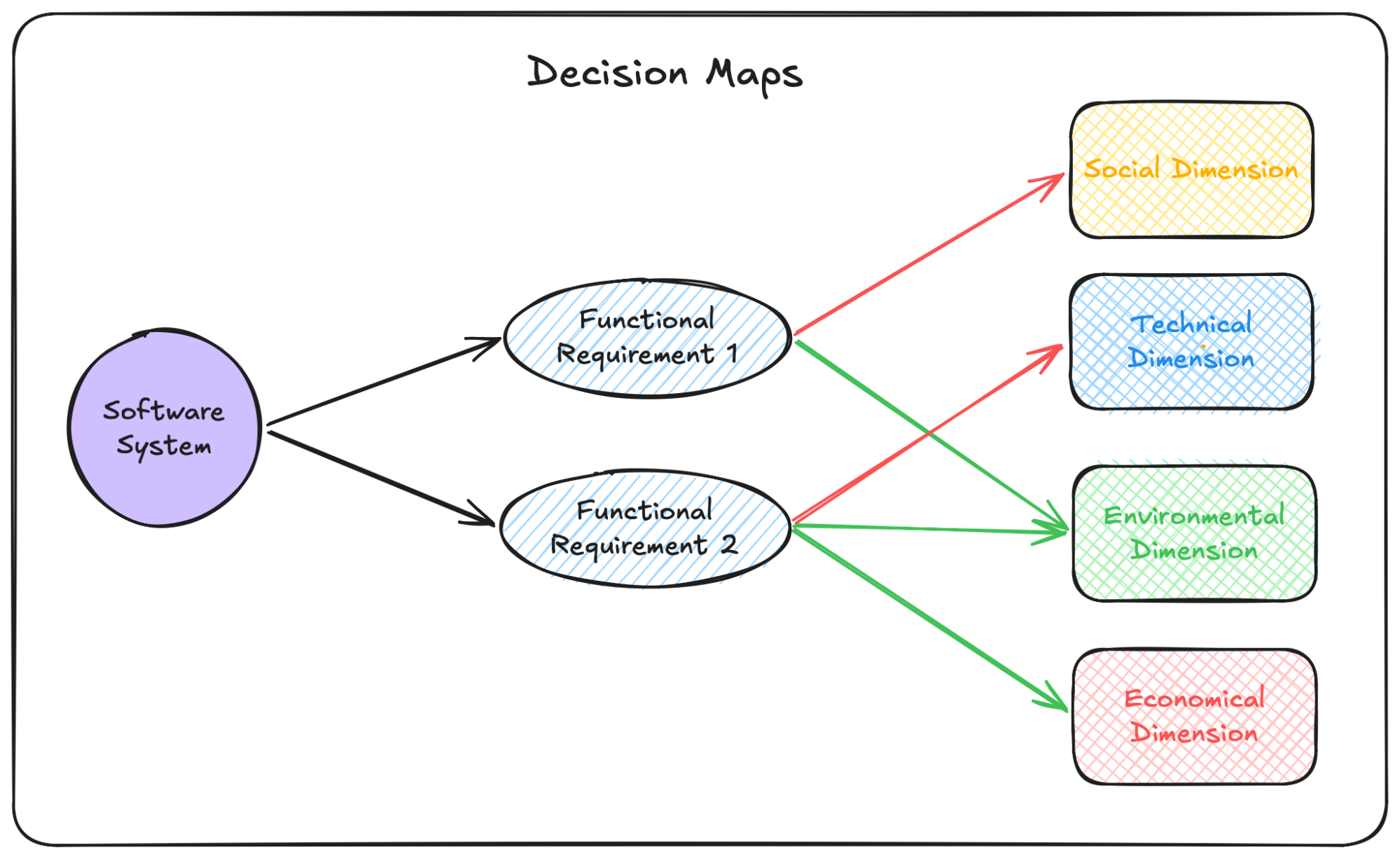

Sustainability in software involves four distinct dimensions. The environmental dimension focuses on energy use and carbon footprint. The economic dimension looks at operational costs. The technical dimension deals with accuracy and maintainability. Finally, the social dimension covers user trust and societal impact.

Design-Time Boundaries with Decision Maps

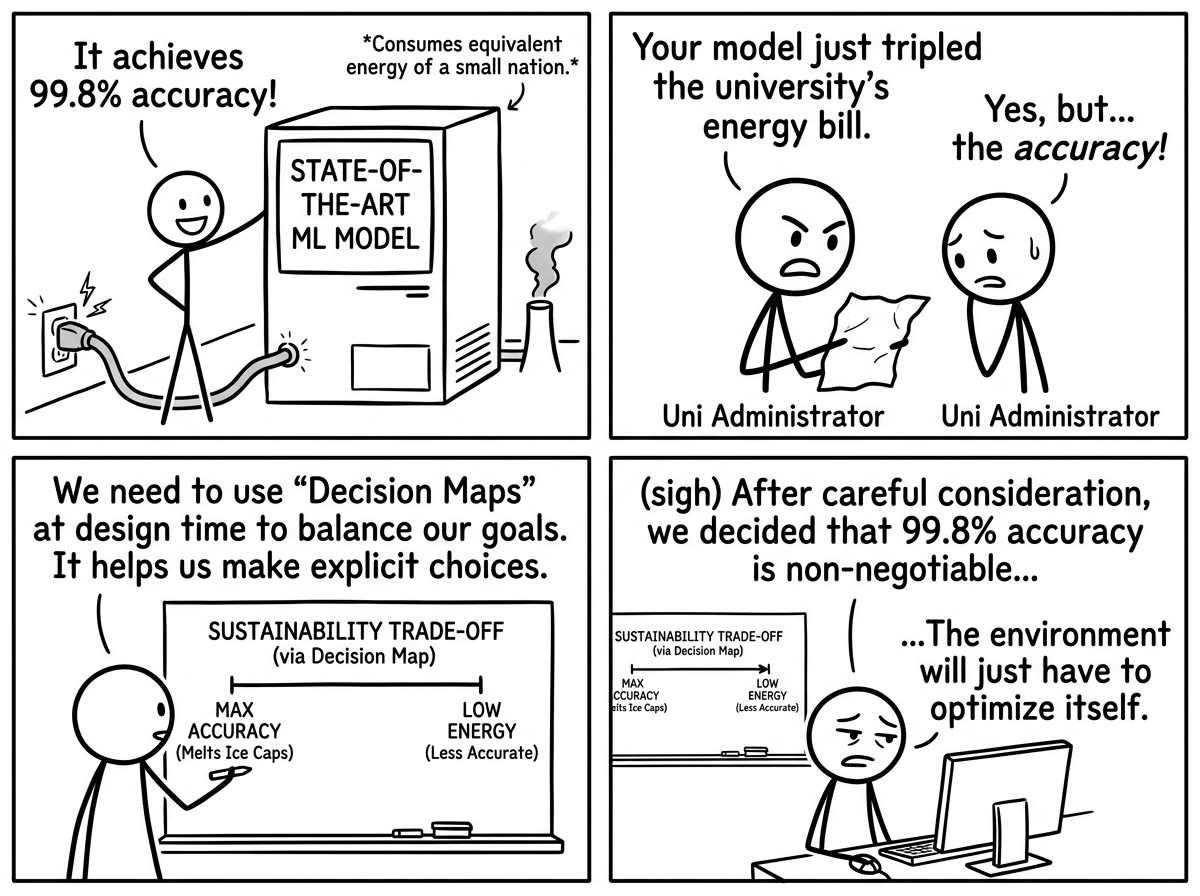

How do we build a system that balances all four dimensions? The authors of "Expressing the Adaptation Intent as a Sustainability Goal" suggest using Decision Maps. At design time, architects use these maps to lay out system features and link them to the four sustainability dimensions.

For example, retraining a machine learning model might improve technical sustainability by increasing prediction accuracy. However, that same action hurts environmental sustainability by increasing energy consumption. A Decision Map helps developers set explicit boundaries. It defines exactly how much accuracy the team is willing to trade for energy savings. It makes the adaptation intent clear from the start.
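A Decision Map is a design artifact, not code, but the boundaries it produces can be written down as plain data. The Python sketch below assumes a team has settled on a minimum accuracy and an energy budget; the class names, fields, and numbers are hypothetical, and economic or social limits could be added as further fields in the same way.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SustainabilityBoundaries:
    """Hypothetical design-time limits derived from a Decision Map."""
    min_accuracy: float        # technical dimension: lowest acceptable accuracy (0-1)
    max_energy_joules: float   # environmental dimension: energy budget per decision window


@dataclass(frozen=True)
class ActionEffect:
    """Estimated impact of one adaptation action (illustrative numbers only)."""
    name: str
    expected_accuracy: float
    expected_energy_joules: float


def within_boundaries(effect: ActionEffect, bounds: SustainabilityBoundaries) -> bool:
    # An action is acceptable only if it respects every dimension's limit at once.
    return (effect.expected_accuracy >= bounds.min_accuracy
            and effect.expected_energy_joules <= bounds.max_energy_joules)


bounds = SustainabilityBoundaries(min_accuracy=0.85, max_energy_joules=500.0)
retrain = ActionEffect("retrain", expected_accuracy=0.93, expected_energy_joules=1200.0)
light_model = ActionEffect("switch_to_light_model", expected_accuracy=0.87, expected_energy_joules=150.0)
print(within_boundaries(retrain, bounds))      # False: most accurate, but over the energy budget
print(within_boundaries(light_model, bounds))  # True: gives up some accuracy, stays in budget
```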

Decision Maps are useful for planning. However, they cannot stop heavy ML models on a server from drawing too much power at runtime. We need a way to take those design-time boundaries and enforce them while the machine learning system is actually running.

This is where our architectural approach, HarmonE, steps in.

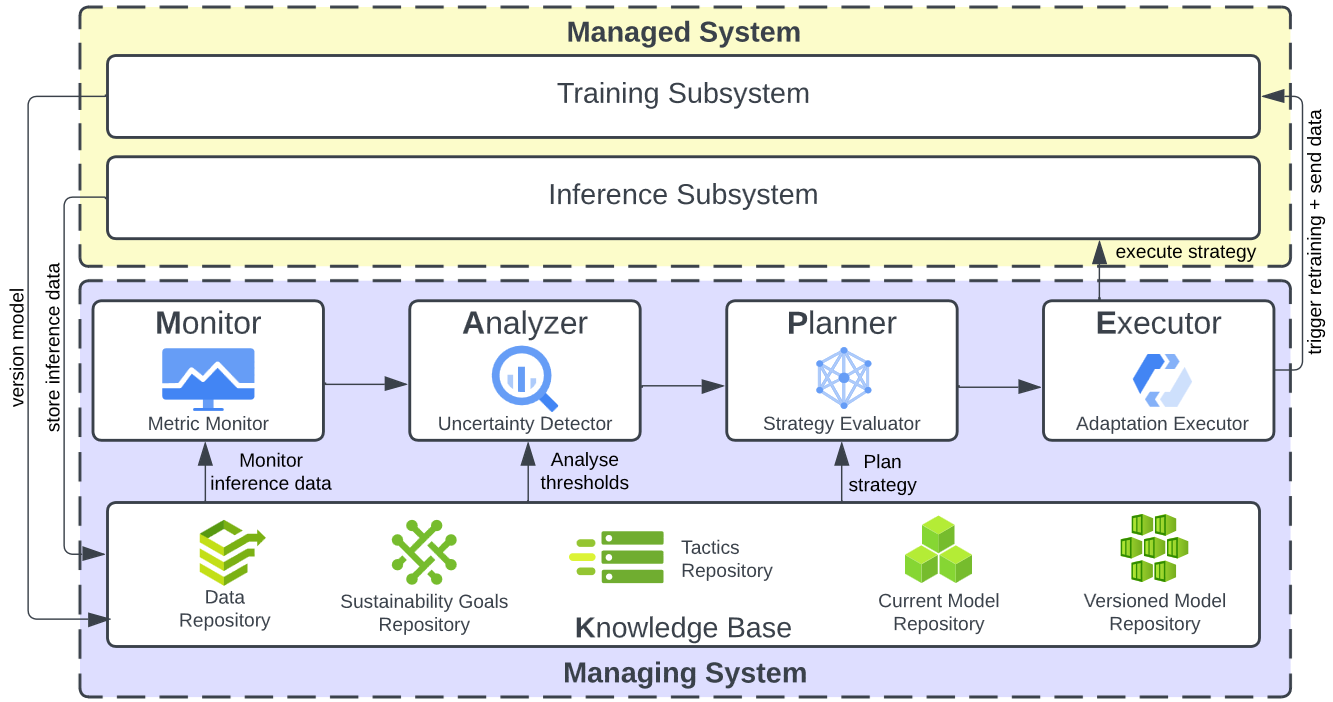

HarmonE bridges the gap between design-time sustainability goals and runtime machine learning operations. It takes the boundaries defined in a Decision Map and feeds them directly into a self-adaptive MAPE-K loop.

From Monitoring to Adaptation

Consider an artificial intelligence system predicting traffic flow. The Monitor component in HarmonE observes the system continuously. It gathers live data on prediction accuracy, measures the energy consumed by the hardware, and watches for distribution shifts in the incoming data. All these metrics are passed directly to the Analyzer component, which evaluates the live telemetry against the acceptable boundaries you set at design time using your Decision Map. As long as the system operates within those sustainable limits, HarmonE leaves the pipeline alone.
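The Analyzer's core check can be sketched as a comparison of one telemetry window against fixed limits. This is a minimal Python illustration under assumed field names and thresholds; it is not HarmonE's code, and how the drift score is computed is left open.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Telemetry:
    """One window of live measurements gathered by the Monitor (hypothetical fields)."""
    accuracy: float      # e.g. share of correct predictions in the latest window
    power_watts: float   # average power draw of the serving hardware
    drift_score: float   # any distribution-shift statistic scaled to [0, 1]


@dataclass(frozen=True)
class Boundaries:
    """Acceptable limits taken from the design-time Decision Map."""
    min_accuracy: float
    max_power_watts: float
    max_drift: float


def analyze(t: Telemetry, b: Boundaries) -> List[str]:
    """Return every violated boundary; an empty list means 'leave the pipeline alone'."""
    violations = []
    if t.accuracy < b.min_accuracy:
        violations.append("accuracy")
    if t.power_watts > b.max_power_watts:
        violations.append("energy")
    if t.drift_score > b.max_drift:
        violations.append("drift")
    return violations


bounds = Boundaries(min_accuracy=0.85, max_power_watts=60.0, max_drift=0.2)
print(analyze(Telemetry(accuracy=0.91, power_watts=40.0, drift_score=0.05), bounds))  # []
print(analyze(Telemetry(accuracy=0.78, power_watts=75.0, drift_score=0.05), bounds))  # ['accuracy', 'energy']
```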

If a sudden disruption causes the model to lose accuracy, or if the server draws too much power, the Analyzer flags a violation. The Planner component then selects the most suitable adaptation tactic. It might switch the active model to a lighter, more energy-efficient version from its model repository. However, if the incoming data has changed fundamentally, swapping models may not be enough, and the system needs to adapt to the new data distribution.
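One simple way to express this decision is a rule-based planner. The sketch below assumes each candidate model has been profiled for accuracy and power draw, and it prefers the lowest-power model that still satisfies every boundary. The tactic names and the selection rule are assumptions for illustration; HarmonE's actual Planner may weigh tactics differently.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class ModelProfile:
    """Profiled behaviour of one deployable model (hypothetical numbers)."""
    name: str
    accuracy: float      # accuracy measured on recent data
    power_watts: float   # average power draw while serving


def plan(violations: List[str], candidates: List[ModelProfile],
         min_accuracy: float, max_power_watts: float) -> Tuple[str, Optional[str]]:
    """Pick a tactic; returns (tactic, target model name or None)."""
    if not violations:
        return ("no_op", None)
    if "drift" in violations:
        # The data itself has changed: hand over to the shift-handling path.
        return ("adapt_to_shift", None)
    feasible = [m for m in candidates
                if m.accuracy >= min_accuracy and m.power_watts <= max_power_watts]
    if feasible:
        # Among models that satisfy every boundary, prefer the most energy-efficient one.
        best = min(feasible, key=lambda m: m.power_watts)
        return ("switch_model", best.name)
    # Assumption: if no existing model fits the current data, treat it as a shift.
    return ("adapt_to_shift", None)


models = [ModelProfile("lstm", 0.92, 70.0),
          ModelProfile("svm", 0.89, 25.0),
          ModelProfile("linear", 0.86, 12.0)]
print(plan(["energy"], models, min_accuracy=0.85, max_power_watts=60.0))  # ('switch_model', 'linear')
print(plan(["accuracy", "drift"], models, 0.85, 60.0))                     # ('adapt_to_shift', None)
```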

Before spending energy on a computationally expensive retraining cycle, the Planner checks a component called the Versioned Model Repository. This repository stores previously trained models alongside the specific data distributions they learned from. If the new data shift matches a historical pattern, such as a recurring seasonal traffic jam, the system simply reuses the archived model. This avoids redundant training and can save a substantial amount of energy.
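How HarmonE compares a new distribution with archived ones is not described here. As a stand-in, this Python sketch fingerprints each model by a sample of one input feature (for example, traffic flow) and uses the two-sample Kolmogorov-Smirnov statistic to decide whether new data matches a historical pattern. The class name, the 0.1 threshold, and the single-feature simplification are all assumptions.

```python
from bisect import bisect_right
from dataclasses import dataclass, field
from typing import List, Optional, Sequence, Tuple


def ks_statistic(a: Sequence[float], b: Sequence[float]) -> float:
    """Two-sample Kolmogorov-Smirnov statistic: largest gap between the two empirical CDFs."""
    a_sorted, b_sorted = sorted(a), sorted(b)
    gap = 0.0
    for x in a_sorted + b_sorted:
        # The supremum of the gap is always reached at one of the sample points.
        cdf_a = bisect_right(a_sorted, x) / len(a_sorted)
        cdf_b = bisect_right(b_sorted, x) / len(b_sorted)
        gap = max(gap, abs(cdf_a - cdf_b))
    return gap


@dataclass
class VersionedModelRepository:
    """Hypothetical archive of models keyed by the data they were trained on."""
    max_ks: float = 0.1  # assumed threshold: at or below this, distributions count as "the same"
    entries: List[Tuple[str, object, List[float]]] = field(default_factory=list)

    def save(self, version: str, model: object, training_sample: Sequence[float]) -> None:
        self.entries.append((version, model, list(training_sample)))

    def find_match(self, recent_sample: Sequence[float]) -> Optional[Tuple[str, object]]:
        """Return the archived (version, model) whose training data matches best, else None."""
        best, best_gap = None, self.max_ks
        for version, model, sample in self.entries:
            gap = ks_statistic(sample, recent_sample)
            if gap <= best_gap:
                best, best_gap = (version, model), gap
        return best


repo = VersionedModelRepository()
repo.save("v1-weekday", "weekday-model", [float(x) for x in range(100, 200)])
repo.save("v2-holiday", "holiday-model", [float(x) for x in range(20, 80)])

match = repo.find_match([x + 0.5 for x in range(20, 80)])    # looks like holiday traffic again
print(match[0] if match else "retrain")                      # v2-holiday
print(repo.find_match([float(x) for x in range(300, 400)]))  # None -> schedule retraining
```

A `None` result is the signal to fall through to a full retraining cycle; a match lets the Executor reactivate the archived version instead.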

If no historical match exists, the Planner schedules a full retraining cycle. Finally, the Executor component takes over to enact the plan. The Executor communicates directly with the underlying machine learning pipeline to swap out active models or trigger the training subsystem. Through this complete loop, HarmonE dynamically balances short-term predictive performance with long-term sustainability. It makes these complex trade-offs on the fly without needing a human administrator to intervene.
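On the execution side, the Executor needs only two effectors: one that changes which model serves predictions and one that starts training. This Python sketch models both as a dictionary update and a plain callable; the tactic names, the `start_retraining` hook, and the log format are hypothetical, and a real pipeline would need more care (for instance, around rollback).

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple


@dataclass
class Executor:
    """Hypothetical Executor that applies planned tactics to an ML pipeline."""
    models: Dict[str, object]                           # model versions the pipeline can serve
    start_retraining: Callable[[], Tuple[str, object]]  # assumed hook into the training subsystem
    active: Optional[str] = None                        # version currently serving predictions
    log: List[str] = field(default_factory=list)        # audit trail for offline analysis

    def execute(self, tactic: str, target: Optional[str] = None) -> None:
        if tactic == "no_op":
            return
        if tactic in ("switch_model", "reuse_model"):
            # Swap to a model that already exists (a lighter variant or an archived version).
            if target not in self.models:
                raise KeyError(f"unknown model version: {target}")
            self.active = target
        elif tactic == "retrain":
            # Train a new model, register it, and make it the active one.
            version, model = self.start_retraining()
            self.models[version] = model
            self.active = version
        else:
            raise ValueError(f"unsupported tactic: {tactic}")
        self.log.append(f"{tactic} -> {self.active}")


executor = Executor(models={"lstm": "lstm-model", "linear": "linear-model"},
                    start_retraining=lambda: ("lstm-v2", "retrained-lstm"),
                    active="lstm")
executor.execute("switch_model", "linear")
executor.execute("retrain")
print(executor.active)  # lstm-v2
print(executor.log)     # ['switch_model -> linear', 'retrain -> lstm-v2']
```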

Making It Practical with Harmonica

Reading about software architecture is one thing. Testing it is another.

We wanted to make it easy for anyone to experiment with these concepts. If you want to test how self-adaptation can improve sustainability, building a custom monitoring pipeline from scratch is a high barrier to entry. To solve this, we created Harmonica, our self-adaptation exemplar.

An exemplar is a ready-to-use software tool designed for experimentation. We built Harmonica so researchers and developers can download it, install it, and start testing their own sustainability ideas immediately. It wraps around your existing machine learning pipelines without requiring you to rewrite your training or inference code.

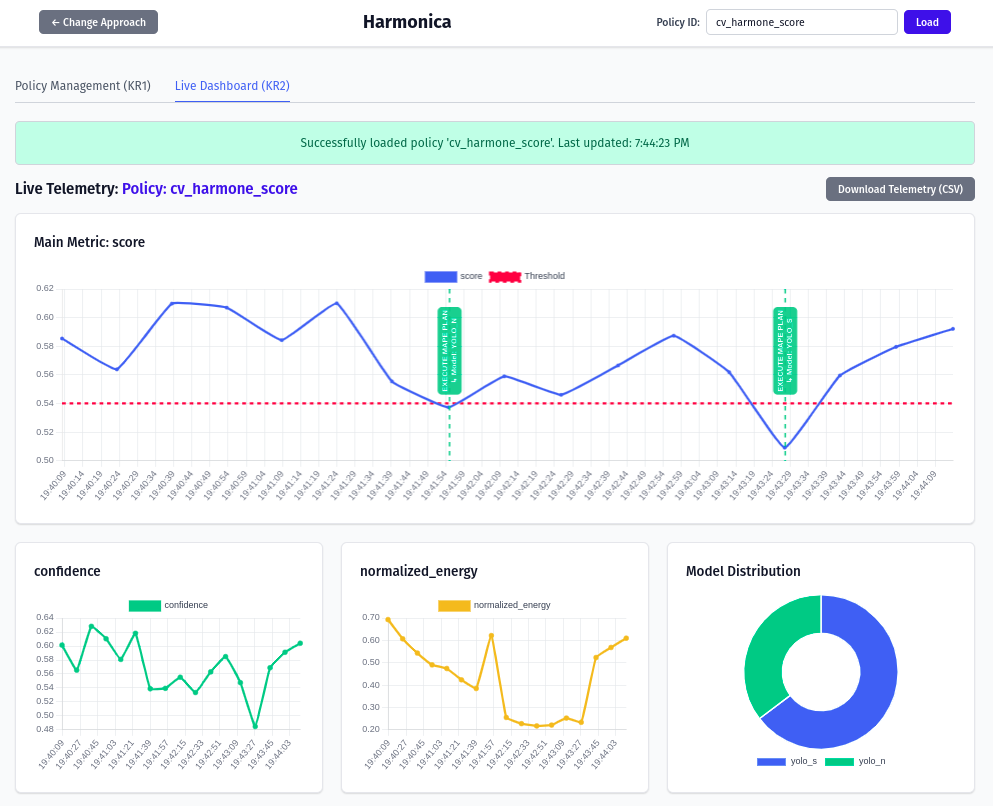

Harmonica comes with a lightweight web interface. You can use the dashboard to set adaptation boundaries, pick preferred tactics, and watch the system adapt. We included built-in case studies for both time-series regression (like traffic prediction) and computer vision (like object detection using YOLO models).

You can upload your own datasets, plug in your own models, and define custom policies using basic configuration files. The live dashboard shows you exactly how much energy your models are consuming. It also shows you when the system decides to switch models or retrain. For deeper research, it provides downloadable telemetry and logs for offline analysis.

We invite you to visit our open-source repository, pull the code, and try it out. Experiment with different thresholds. See what happens when you prioritize energy savings over raw accuracy in a computer vision task. Test how your own self-adaptation strategies perform when forced to consider economic and environmental costs.

Our computing systems will only continue to grow in scale. If we want them to remain viable, we need to teach them how to adapt. With approaches like HarmonE, we can build software that is smart, responsive, and truly sustainable.